The Agentic Engineering Trends Report 2026

The way software gets built has changed. We are entering the era of agentic engineering — a new operating model where engineers define the plan, AI agents execute large portions of the work, and humans review, refine, secure, and orchestrate the system. This report provides a comprehensive guide for SaaS CEOs and engineering leaders on how to adopt agentic engineering practices, backed by data from Anthropic, JetBrains, Stack Overflow, McKinsey, GitHub, and surveys of over 25,000 developers.

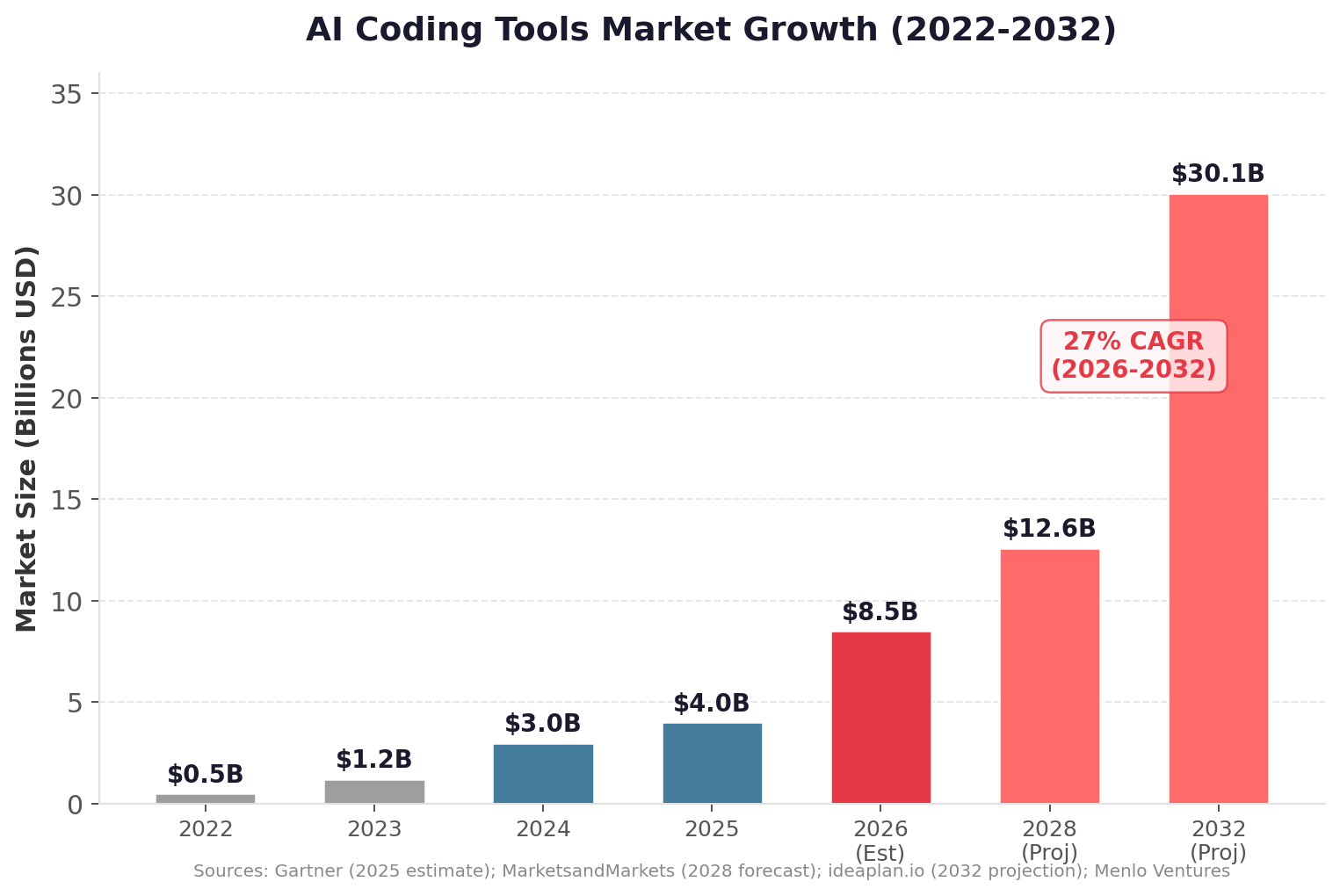

The numbers are striking: 90% of developers now use AI coding tools, 41% of all code is AI-generated, and agentic coding tools like Claude Code grew 6x in workplace adoption in under 12 months. The AI coding tools market hit $8.5 billion in 2026 and is projected to reach $30 billion by 2032. The companies that master this transition will ship faster, learn faster, and compound faster than those still working in the old model.

This report is published by SaasRise, the #1 mastermind community for SaaS CEOs with $1M–$100M+ in ARR. Members have collectively raised $1B+ and have $3B+ in ARR.

The State of AI-Powered Engineering (2026)

📋 Table of Contents

- The Agentic Engineering Paradigm Shift

- The Data: AI Coding Adoption Has Hit Escape Velocity

- The AI Coding Tool Landscape (2026)

- From Coders to AI-Native Engineering Orchestrators

- Leadership Must Go AI-First

- Shift Developers from Coders to Planners

- Invest Properly in AI Tools and Tokens

- Build Agent Systems, Not Just Prompts

- Set Up Agents and Subagents the Right Way

- Keep Agent Lifecycles Short-Lived

- Build a Real Knowledge Base for Your Agents

- Use Iteration, Not One-Shot Coding

- Make Security a First-Class Agent Workflow

- Structure Repositories for AI Readability

- Redefine Engineering Metrics for the Agentic Era

- Balance AI-Native Talent with Senior Judgment

- Compress Timeline Expectations

- Create Weekly AI Engineering Practice Sessions

- The Recommended Agentic Engineering Stack

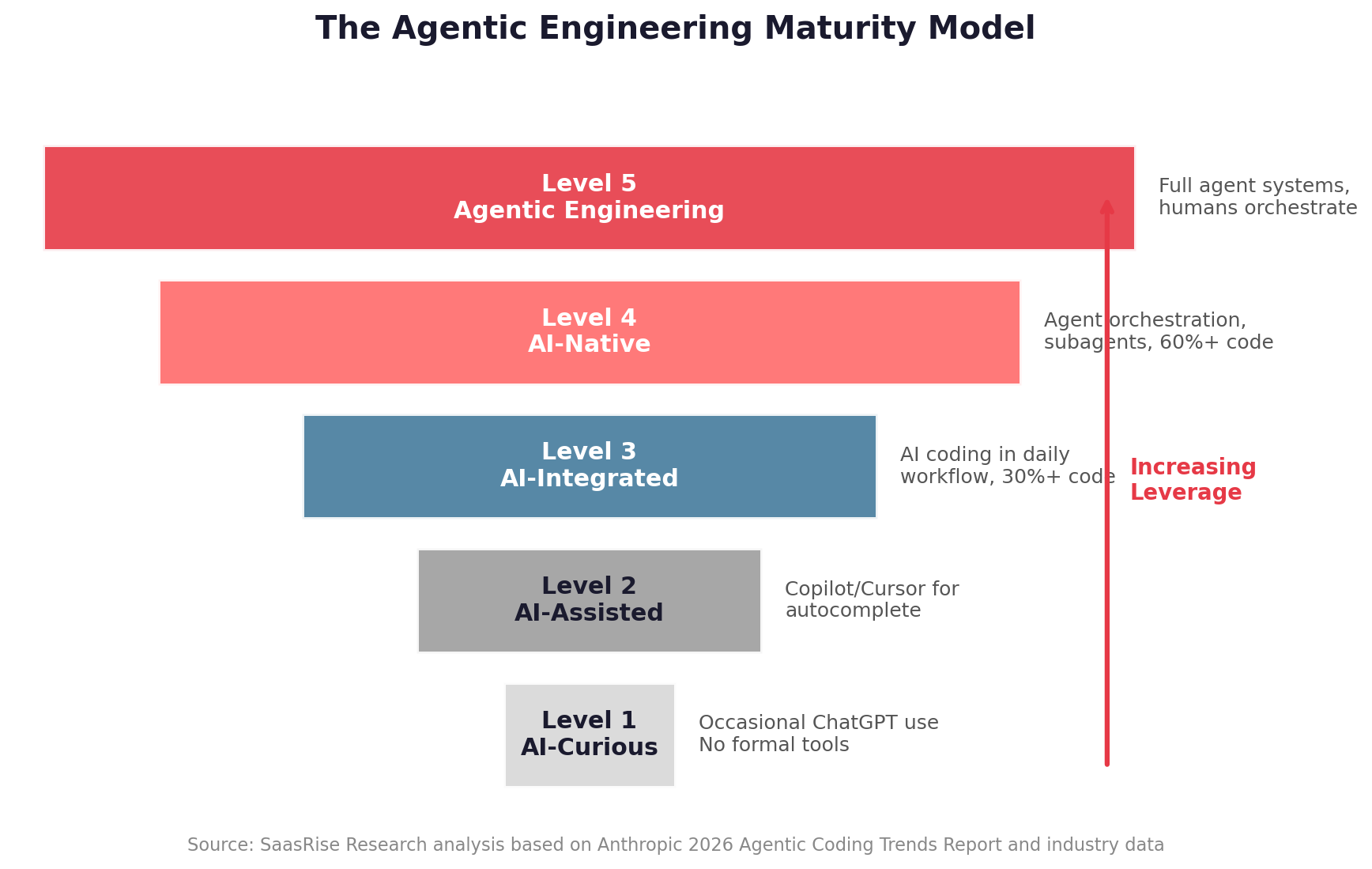

- The Agentic Engineering Maturity Model

- Conclusion: The New Engineering Operating System

1. The Agentic Engineering Paradigm Shift

For decades, engineering productivity was constrained by the speed at which humans could write, review, test, and ship code. That world is ending. We are now entering the era of agentic engineering: a new operating model where engineers define the plan, AI agents execute large portions of the work, and humans review, refine, secure, and orchestrate the system.

This is not "using ChatGPT to help with code." This is a deeper shift in how engineering organizations operate. The world of agentic engineering runs on tools like Claude Code, Cursor, OpenAI Codex, and VS Code with AI extensions — or even directly in the terminal.

Since the launch of Claude Opus 4.5 in November 2025, followed by Opus 4.6 (February 2026) and the current Opus 4.7 (April 2026), Claude has become the default way most production code gets written at AI-native companies. Anthropic describes Claude Code as an agentic coding system that reads your codebase, makes changes across files, runs tests, and delivers committed code.

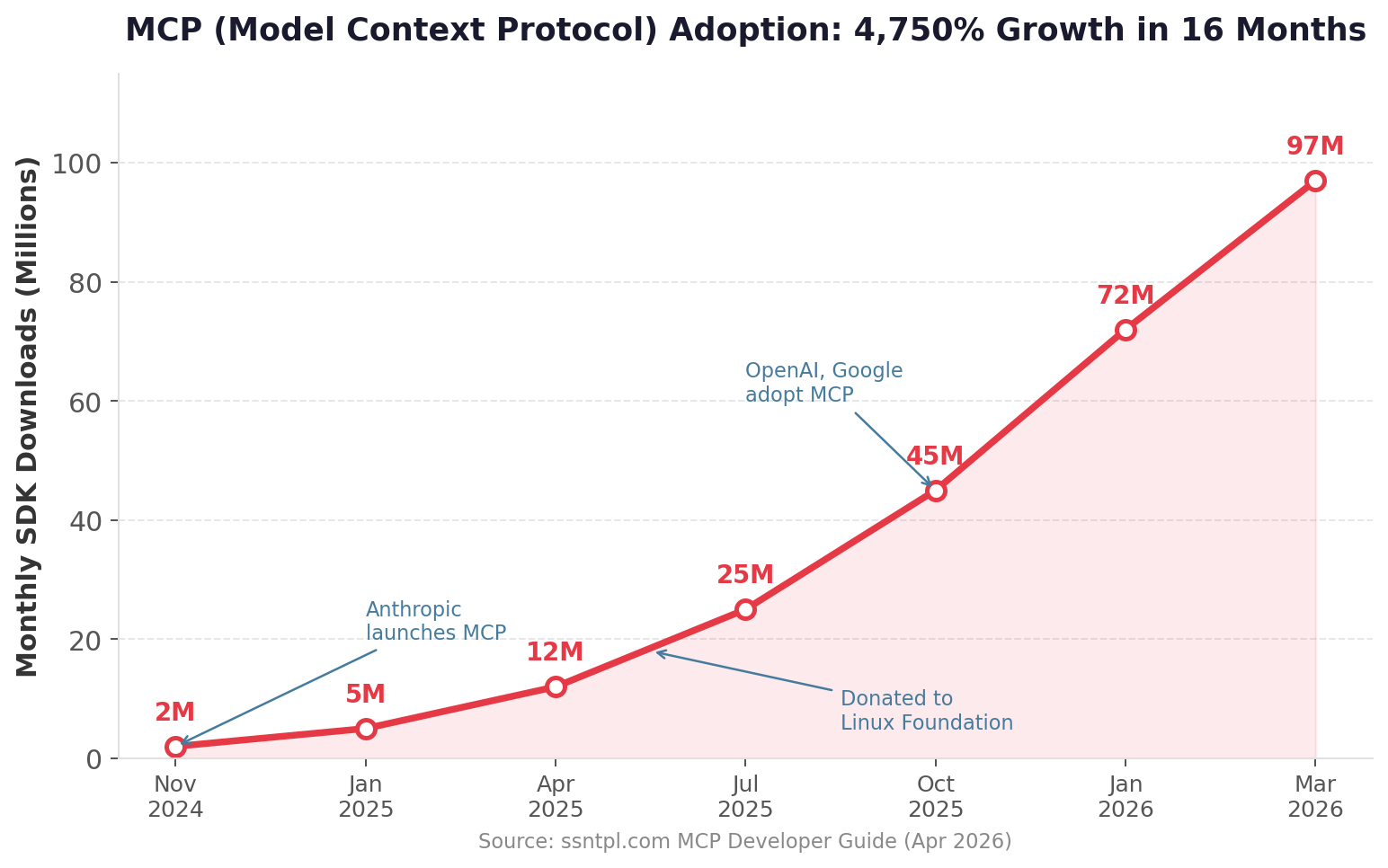

Cursor positions its agents as a way to "turn ideas into code" while developers focus on decisions and architecture. MCP, the Model Context Protocol, has emerged as an open standard for connecting AI applications to tools, databases, files, and workflows. In December 2025, Anthropic donated MCP to the Linux Foundation's Agentic AI Foundation, and it has since been adopted by OpenAI, Google, and most major AI coding tools.

The teams that master agentic engineering will ship faster, learn faster, and compound faster than competitors still working in the old model. The companies that don't will wonder why their competitors suddenly seem to have twice the engineering team.

2. The Data: AI Coding Adoption Has Hit Escape Velocity

The shift to AI-powered engineering is no longer theoretical. Every major developer survey in 2025–2026 tells the same story: adoption has crossed the tipping point and is accelerating.

Key Adoption Data Points

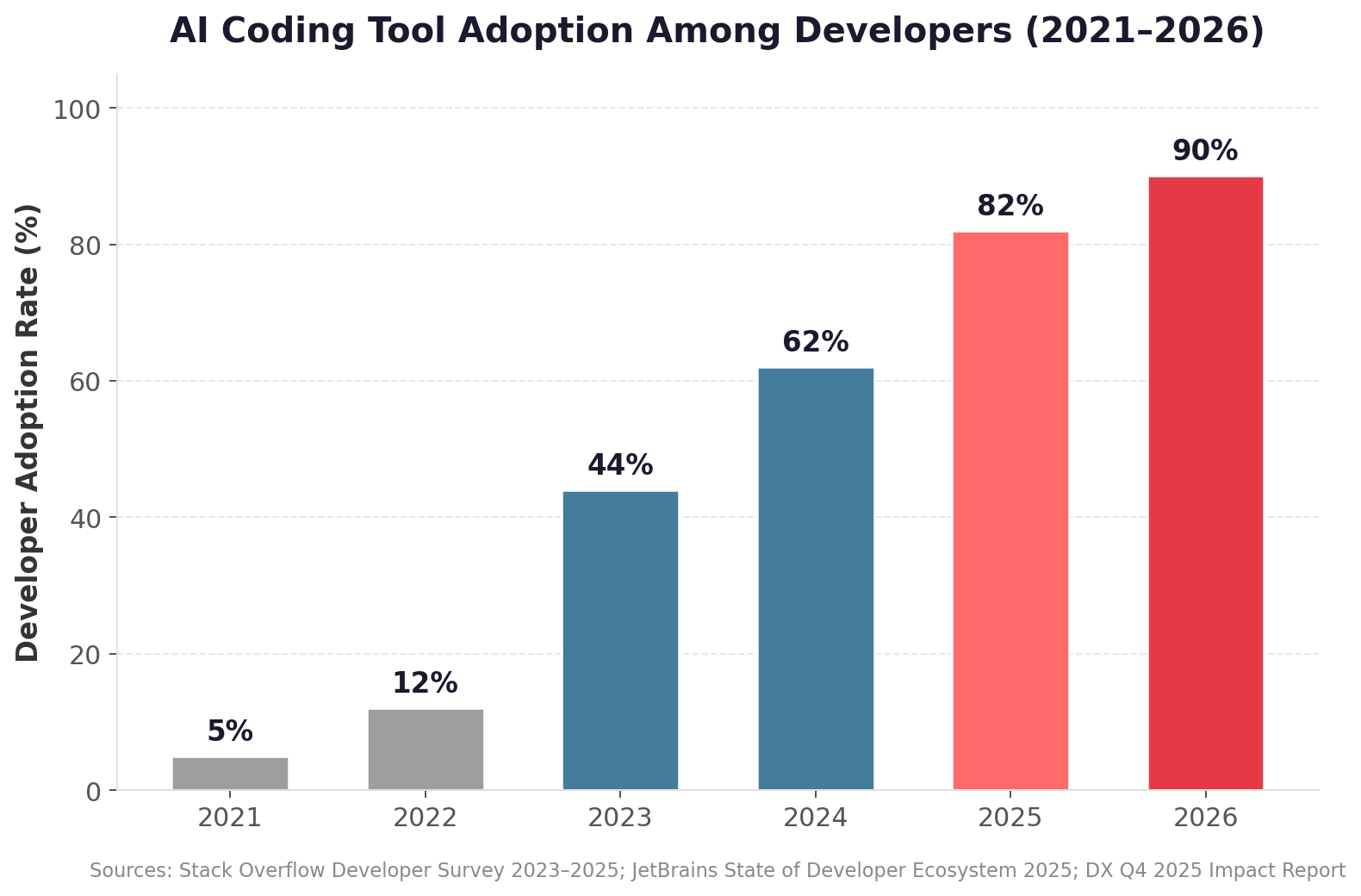

- 90% of developers now use at least one AI coding tool, up from just 5% when GitHub Copilot launched in technical preview in 2021. (UVik 2026 Usage Report)

- 84% of developers use or plan to use AI tools in their development process, with 51% using AI tools daily. (Stack Overflow 2025 Developer Survey)

- 85% of developers regularly use AI tools for coding and development. (JetBrains State of Developer Ecosystem 2025)

- 91% AI adoption across a sample of 135,000+ developers. (DX Q4 2025 Impact Report)

The Rise of AI-Generated Code

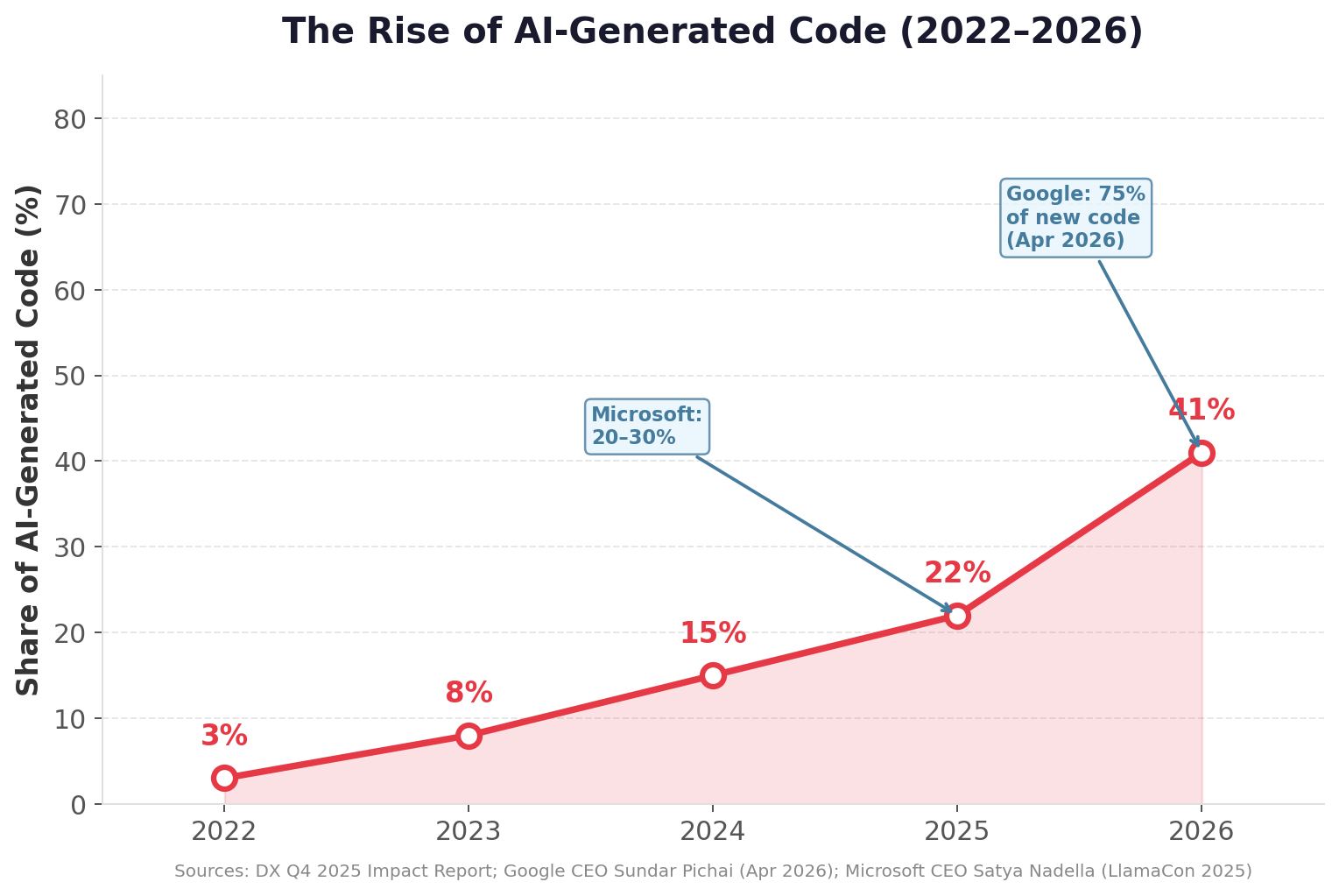

Perhaps the most striking trend is the rapid increase in the percentage of code that is AI-generated. What was once a novelty is now a production reality at the world's largest technology companies.

| Company | AI-Generated Code Share | Source |
|---|---|---|
| Google | 75% of new code | CEO Sundar Pichai, April 2026 |
| Microsoft | 20–30% of active projects | CEO Satya Nadella, LlamaCon 2025 |
| GitHub Copilot users | 46% of code written | GitHub Copilot Telemetry |
| Industry average | 41% of all code | Netcorp 2026 Statistics |
| DX developer sample | 22% of merged code | DX Q4 2025 Impact Report (n=135,000) |

Productivity Gains Are Real — But Nuanced

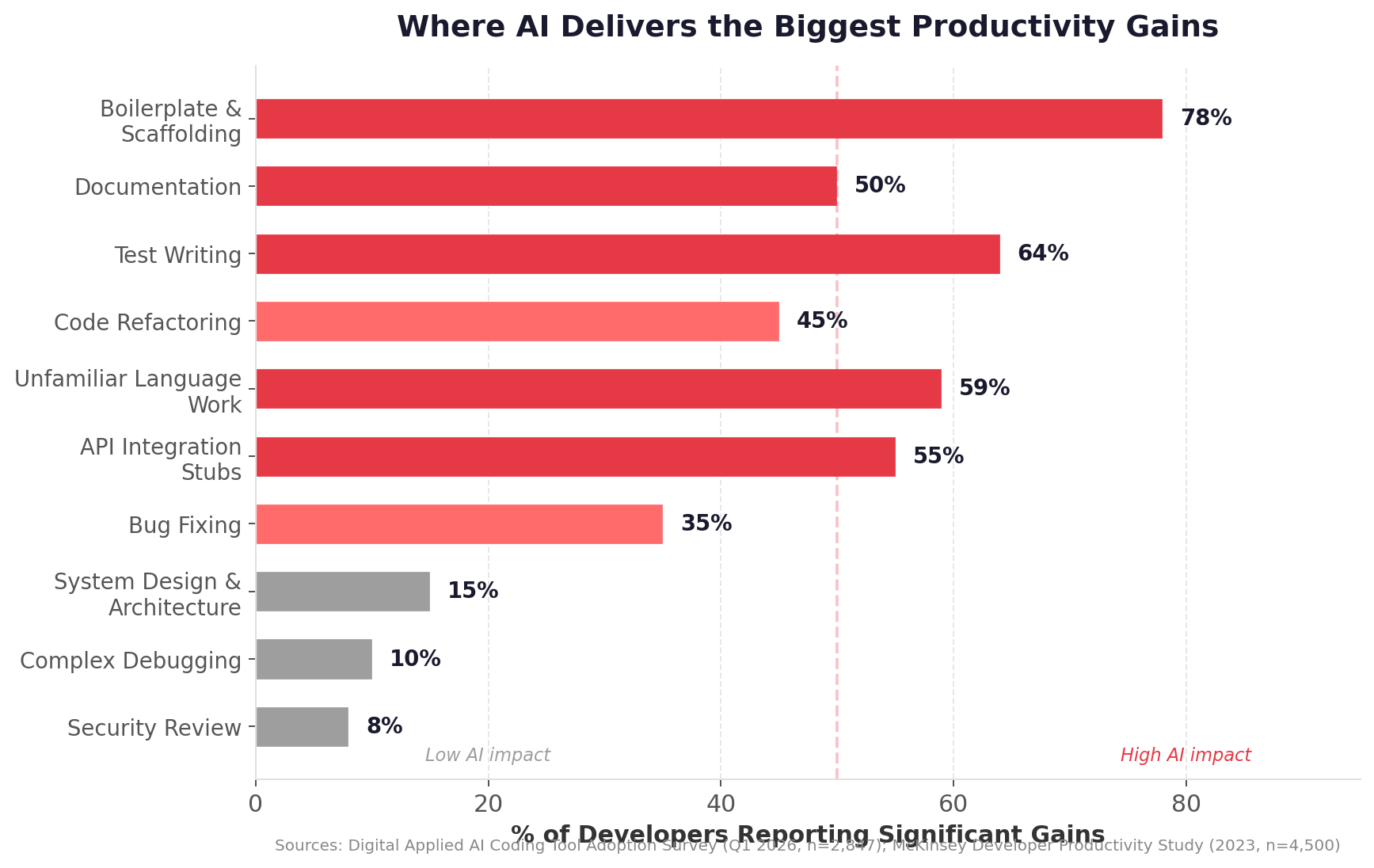

The headline finding from GitHub's controlled experiment — a 55.8% faster task completion rate with Copilot — captures attention, but the full picture is more nuanced. McKinsey's study of 4,500 developers found AI tools reduce time on routine coding tasks by 46%, with documentation completed in half the time and code refactoring in nearly two-thirds the time.

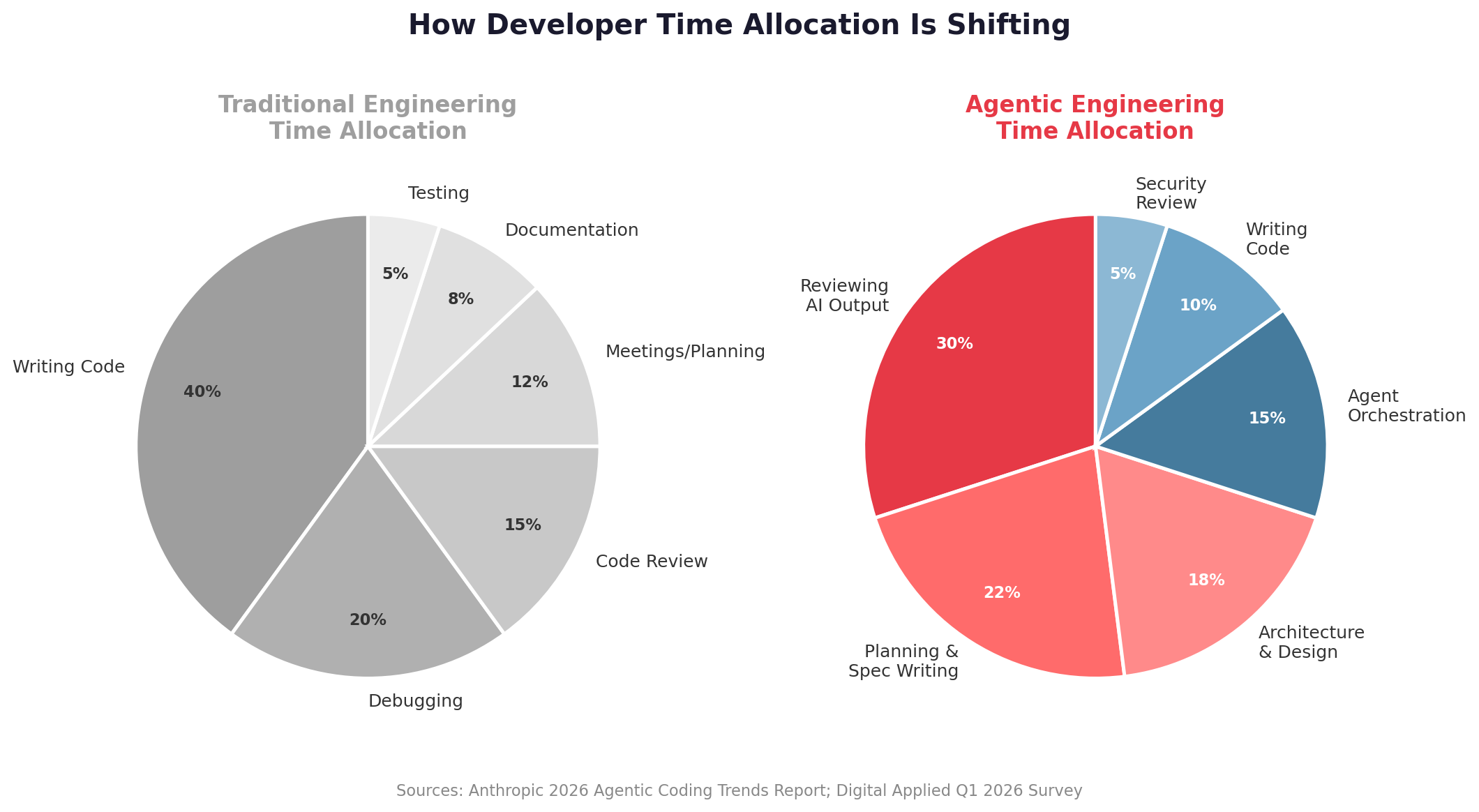

Anthropic's own 2026 Agentic Coding Trends Report found that while engineers use AI in roughly 60% of their work, they report being able to "fully delegate" only 0–20% of tasks. Internal research at Anthropic reveals that engineers report a net decrease in time spent per task category, but a much larger net increase in output volume. Notably, about 27% of AI-assisted work consists of tasks that wouldn't have been done otherwise — scaling projects, building internal tools, and fixing "papercuts" that improve code quality.

DX's analysis of 135,000+ developers found an average of 3.6 hours saved per week per developer, with daily AI users merging 60% more pull requests than non-users. The Digital Applied Q1 2026 survey (n=2,847) found a median productivity gain of +34% in the first 60 days, rising only slightly to a plateau of +37% at 180 days.

The productivity paradox: Review time now exceeds writing time. Developers report spending 11.4 hours per week reviewing AI-generated code versus 9.8 hours writing new code — a reversal of the 2024 pattern. As one developer survey put it: "When the AI produces more code than a developer can meaningfully review, teams either merge under-reviewed work or queue PRs indefinitely."

3. The AI Coding Tool Landscape (2026)

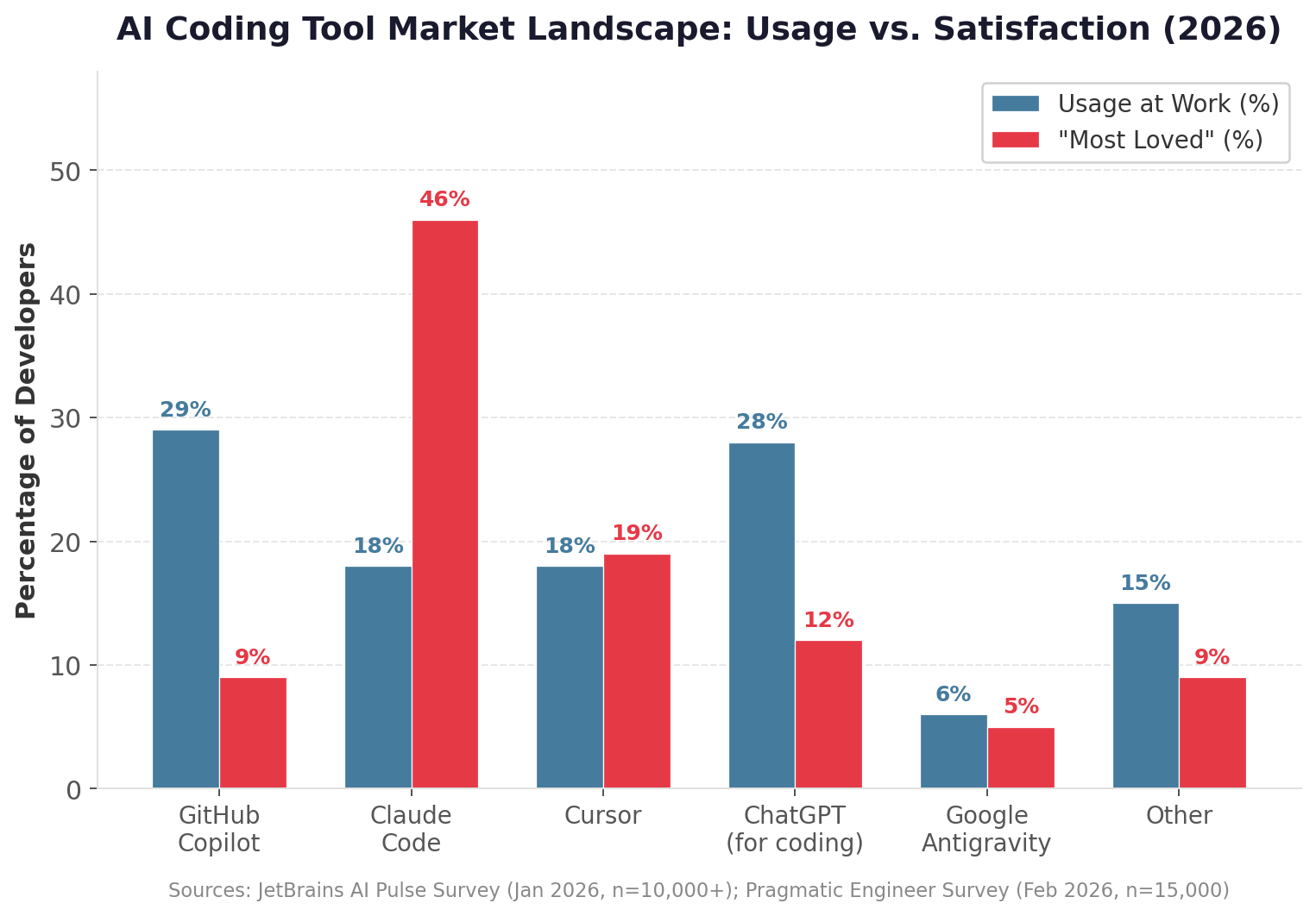

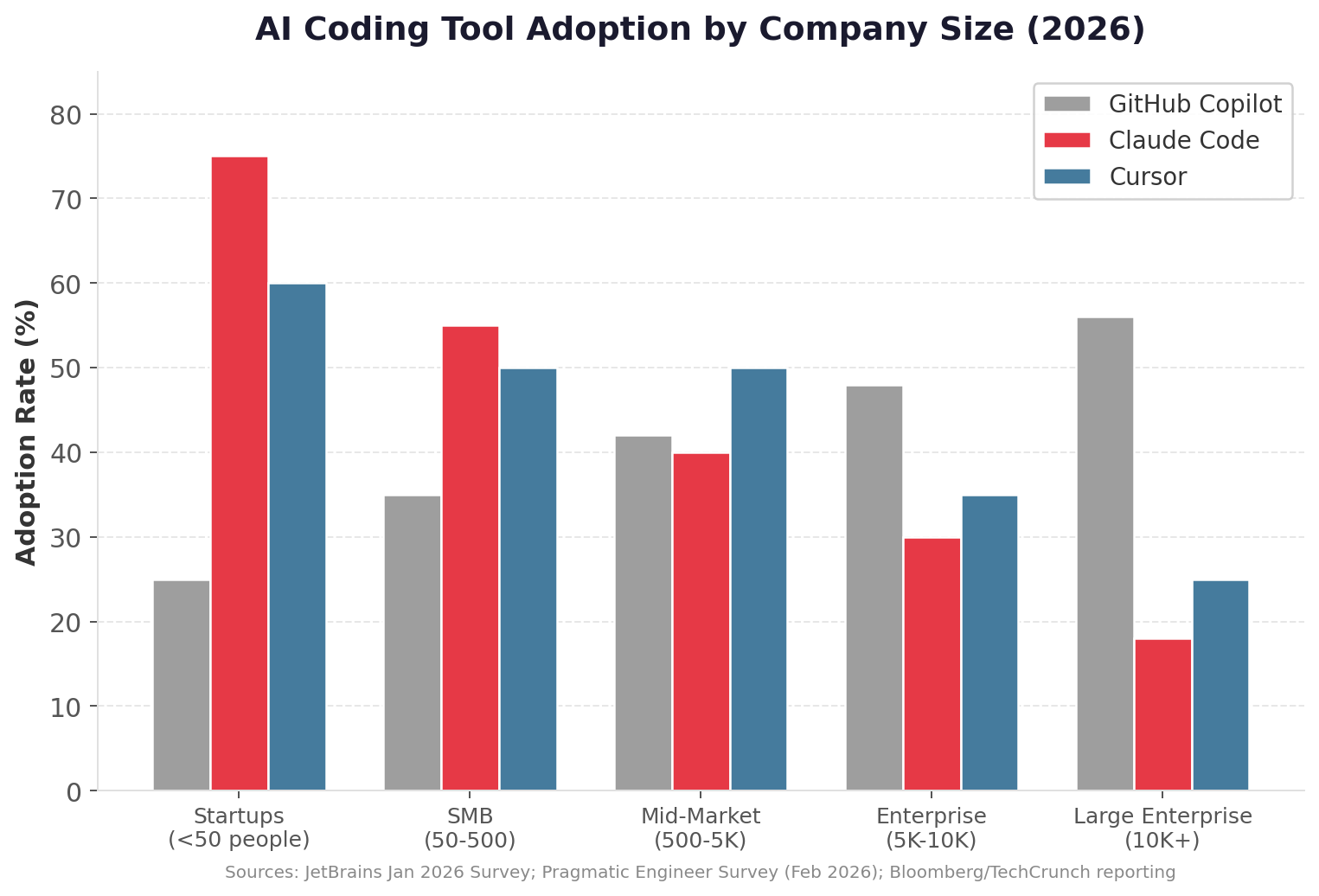

The AI coding tool market has matured into a three-way race between GitHub Copilot, Claude Code, and Cursor — each winning different segments and use cases. The defining behavior of 2026 is tool stacking: 70% of engineers use 2–4 AI coding tools simultaneously.

| Tool | Usage at Work | "Most Loved" | ARR | Key Strength |
|---|---|---|---|---|
| GitHub Copilot | 29% | 9% | — | Widest IDE support, enterprise compliance, 4.7M paid subscribers |
| Claude Code | 18% | 46% | ~$2.5B (est.) | Deepest codebase understanding, 91% CSAT, 75% startup adoption |
| Cursor | 18% | 19% | $2B | Best IDE workflow, 72% autocomplete acceptance, fastest SaaS revenue ever |
| OpenAI Codex | — | — | — | GPT-5.x-Codex models, CLI + IDE + web, native computer use |

Sources: JetBrains AI Pulse Survey (Jan 2026, n=10,000+); Pragmatic Engineer Survey (Feb 2026, n=15,000); ideaplan.io (Apr 2026).

The Explosive Growth of AI Coding Revenue

No market segment illustrates the velocity of the agentic engineering shift better than the revenue growth of AI coding tools. Cursor's trajectory is the fastest in SaaS history — from $40M ARR in August 2024 to $2B ARR in February 2026, with revenue doubling in just three months from $1B to $2B.

4. From Coders to AI-Native Engineering Orchestrators

The core change is simple: engineers are moving from writing code line-by-line to designing plans, systems, and review loops that AI agents execute.

In the old model, developers wrote most of the code manually. In the new model, developers increasingly become:

- Product interpreters — translating business requirements into technical specifications

- Technical planners — breaking work into agent-executable units

- System architects — designing systems that AI can safely modify

- Agent orchestrators — coordinating multiple AI agents working in parallel

- Code reviewers — validating AI-generated output for correctness and quality

- Security reviewers — ensuring AI-generated code doesn't introduce vulnerabilities

The goal for modern engineering teams should be bold: 80–90% of routine implementation code should eventually be AI-generated, with humans owning architecture, judgment, review, and risk. That does not mean developers become less important. It means the best developers become dramatically more leveraged.

5. Leadership Must Go AI-First

Agentic engineering transformation starts at the top. If the CTO, VP Engineering, or senior technical leadership is skeptical, the culture will not change fast enough. AI adoption cannot be treated as a side experiment. It has to become as fundamental as using Git, Slack, or a laptop.

The Leadership Message Should Be Clear

Using AI is not optional. It is now part of the job.

- Every engineer is expected to use AI coding tools daily

- Engineering managers track adoption and output

- Teams share workflows and prompt patterns

- Top AI-native engineers are celebrated and rewarded

- Skeptical senior engineers are coached, not ignored

This is a cultural transformation first and a tooling transformation second. The companies that win will not just buy AI tools — they will rebuild engineering culture around them.

6. Shift Developers from Coders to Planners

The highest-leverage AI-native engineer is not the person who types the fastest. It is the person who can create the clearest plan.

Agentic engineering works best when engineers produce detailed specifications before execution. A good plan should define:

- The business goal — What problem are we solving?

- The user behavior — How will this be used?

- The affected files and systems — What's the blast radius?

- The constraints — Performance, compatibility, backward compatibility

- The edge cases — What could go wrong?

- The testing requirements — How do we verify correctness?

- The rollback plan — How do we undo this safely?

- The security considerations — What attack surfaces does this create?

- The success criteria — How do we know we're done?

The old bottleneck was: "Can we code this?"

The new bottleneck is: "Can we describe this precisely enough that agents can build it safely?"

This is why strong product thinking, system design, and written communication are becoming core engineering skills. In an AI-native engineering organization, specs are not bureaucracy. Specs are leverage.
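As a rough illustration of "specs are leverage," the plan checklist in this section could be captured as a structured object that refuses to hand work to agents until every section is filled in. This is only a sketch: the class name `AgentSpec`, its field names, and the readiness rule are hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass, fields


@dataclass
class AgentSpec:
    """One field per item in the plan checklist (field names are illustrative)."""
    business_goal: str = ""
    user_behavior: str = ""
    affected_systems: str = ""
    constraints: str = ""
    edge_cases: str = ""
    testing_requirements: str = ""
    rollback_plan: str = ""
    security_considerations: str = ""
    success_criteria: str = ""

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def is_ready_for_agents(self) -> bool:
        """A spec is ready only when every section has been filled in."""
        return not self.missing_sections()


spec = AgentSpec(
    business_goal="Reduce checkout abandonment",
    user_behavior="Shoppers can save a cart and resume later",
)
print(spec.is_ready_for_agents())   # False
print(spec.missing_sections()[:2])  # ['affected_systems', 'constraints']
```

A check like this could run before any agent is dispatched, so that an incomplete spec is sent back to the engineer rather than guessed at by the agent.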

7. Invest Properly in AI Tools and Tokens

Most teams are underinvesting. They buy a $20/month AI tool and think they are doing AI transformation. That is not enough.

If AI can make a $150,000/year engineer 2×, 5×, or even 10× more productive on certain types of work, then spending hundreds or even thousands of dollars per month on AI compute can be a rational investment.

| Investment Level | Monthly Cost | Typical Setup | Expected Productivity Gain |
|---|---|---|---|
| Basic | $0–20 | Free tiers, occasional ChatGPT | 5–10% |
| Standard | $20–40 | Copilot or Claude Pro | 20–30% |
| Professional | $100–200 | Claude Max + Cursor Pro | 35–45% |
| Enterprise | $200–500+ | Multi-agent workflows, custom tooling | 45–60% |

Anthropic's own published estimates for Claude Code sit at $100–$200 per developer per month for enterprise deployments, with an average of around $6 per active day and 90% of users staying below $12 per active day. (Verdent AI, March 2026)

DX reports that developers save an average of 3.6 hours per week. For a developer earning $75/hour, that's $1,080/month in recovered productivity. The most expensive individual AI tool setup (Cursor + Claude Code Max) costs ~$220/month — a 4.9x return.
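The return figure above can be reproduced with a few lines of arithmetic. The sketch below uses only the numbers quoted in this section; the four-weeks-per-month conversion is an assumption inferred from the $1,080 figure, and the constant names are illustrative.

```python
HOURS_SAVED_PER_WEEK = 3.6   # DX figure cited above
HOURLY_RATE_USD = 75         # example rate used above
WEEKS_PER_MONTH = 4          # assumption implied by the $1,080 figure
TOOL_COST_PER_MONTH = 220    # Cursor + Claude Code Max, as cited above

monthly_value = HOURS_SAVED_PER_WEEK * HOURLY_RATE_USD * WEEKS_PER_MONTH
return_multiple = monthly_value / TOOL_COST_PER_MONTH

print(f"Recovered value: ${monthly_value:,.0f}/month")   # Recovered value: $1,080/month
print(f"Return multiple: {return_multiple:.1f}x")        # Return multiple: 4.9x
```

Swapping in your own hourly rate and tool costs gives a quick first-pass estimate; it ignores review overhead and any quality effects, so treat it as a lower-fidelity planning number.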

Do not optimize for the lowest AI bill. Optimize for shorter cycle times, more shipped product, fewer blocked engineers, better test coverage, faster iteration, and higher engineering throughput. AI spend is no longer just software spend. It is engineering capacity spend.

8. Build Agent Systems, Not Just Prompts

The real unlock is not better prompts. The real unlock is agent systems.

A prompt is a one-off instruction. An agent system is a repeatable workflow where AI can understand context, perform a task, check its work, hand off to another agent, and operate within constraints. Modern teams should think in terms of:

Planning Agents

Break requirements into scoped implementation tasks with clear acceptance criteria.

Coding Agents

Write implementation code across files based on specs and architectural guidance.

Test-Writing Agents

Generate unit, integration, and end-to-end tests for new and existing code.

Refactoring Agents

Improve code quality, extract patterns, reduce duplication without changing behavior.

Debugging Agents

Reproduce bugs, trace root causes, and propose targeted fixes.

Security Review Agents

Scan for OWASP vulnerabilities, secrets exposure, and unsafe patterns.

Documentation Agents

Keep README files, API docs, and architecture guides current.

Release-Readiness Agents

Verify PRs are complete, tests pass, and deploy prerequisites are met.

Claude Code can work across files and tools — and as of Opus 4.6, supports running agent teams in parallel. Cursor's team analytics let leaders track AI usage, adoption, and team-level productivity patterns. The winning pattern is not "one AI does everything." The winning pattern is "many focused agents, coordinated by humans and orchestration systems."

9. Set Up Agents and Subagents the Right Way

This is where agentic engineering becomes an operating system. The best teams use an orchestrating agent as the central coordinator. The orchestrator understands the plan, breaks it into work units, and dispatches specialized subagents to execute specific parts.

Think of the orchestrator as an AI technical project manager. Its job is to:

- Read the spec and break the work into phases

- Decide which subagent should handle each task

- Manage handoffs between agents

- Track progress across parallel work streams

- Summarize results and escalate uncertainty back to humans

What Makes a Good Agent?

An agent is essentially:

A model + a role + a focused instruction set + access to tools + access to context

- Frontend component agent — builds UI components within design system constraints

- Backend API agent — implements endpoints following existing patterns

- Database migration agent — writes and validates schema changes

- Test generation agent — creates tests matching project conventions

- OWASP security review agent — scans for the top 10 web vulnerabilities

- PR summary agent — generates clear descriptions for code review

The more focused the agent, the better it performs. Do not ask one agent to "build the whole product." Ask specialized agents to handle their domain — that is how you get reliability.
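To make the "model + role + instructions + tools + context" definition concrete, here is a minimal sketch of an agent definition and an orchestrator that routes tasks to focused subagents and escalates anything it cannot place to a human. All names here (`AgentDefinition`, `Orchestrator`, the `example-model` identifier, the tool names) are hypothetical placeholders, not the API of Claude Code, Cursor, or any other product.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentDefinition:
    """An agent as described above: model + role + instructions + tools + context."""
    model: str                              # placeholder model identifier
    role: str
    instructions: str
    tools: tuple[str, ...] = ()
    context_paths: tuple[str, ...] = ()


@dataclass
class Orchestrator:
    """Routes each task to the subagent registered for its domain."""
    subagents: dict[str, AgentDefinition] = field(default_factory=dict)

    def register(self, domain: str, agent: AgentDefinition) -> None:
        self.subagents[domain] = agent

    def dispatch(self, tasks: list[tuple[str, str]]) -> dict[str, list[str]]:
        """Assign (domain, task) pairs to agents; unknown domains go to a human."""
        plan: dict[str, list[str]] = {}
        for domain, task in tasks:
            agent = self.subagents.get(domain)
            owner = agent.role if agent else "human-escalation"
            plan.setdefault(owner, []).append(task)
        return plan


orchestrator = Orchestrator()
orchestrator.register("backend", AgentDefinition(
    model="example-model", role="backend-api-agent",
    instructions="Implement endpoints following existing patterns.",
    tools=("read_file", "run_tests"), context_paths=("services/api/",),
))
orchestrator.register("tests", AgentDefinition(
    model="example-model", role="test-generation-agent",
    instructions="Create tests matching project conventions.",
))

plan = orchestrator.dispatch([
    ("backend", "Add GET /invoices endpoint"),
    ("tests", "Cover GET /invoices with integration tests"),
    ("billing-policy", "Decide refund rules"),
])
print(plan)
```

The design choice worth noting is the explicit escalation path: a task with no matching specialist is handed to a human instead of being given to a general-purpose agent.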

10. Keep Agent Lifecycles Short-Lived

Long-running agents tend to drift. They accumulate too much context, lose focus, and start making assumptions. Short-lived agents perform better.

The Ideal Agent Pattern

1. Spin up the agent

2. Give it a narrow task

3. Provide only the context it needs

4. Have it complete the task

5. Review the output

6. Shut it down

In practice, many teams run 3–4 terminal-based agents simultaneously, each working on a different scoped task. This gives you parallel execution without chaos. The key is that every agent needs boundaries — no agent should be allowed to roam freely across the codebase without understanding the blast radius of its changes.
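The lifecycle and boundary ideas in this section can be sketched with a context manager that creates an agent for one task, restricts it to one directory, and always shuts it down afterward. This is a toy model under stated assumptions: `ScopedAgent`, `short_lived_agent`, and the file paths are hypothetical, and the "edit" is only recorded, not performed. The caller is expected to review the returned change list.

```python
from concurrent.futures import ThreadPoolExecutor
from contextlib import contextmanager
from pathlib import PurePosixPath


class BoundaryError(Exception):
    """Raised when an agent tries to touch a file outside its allowed scope."""


class ScopedAgent:
    def __init__(self, task: str, context: list[str], allowed_dir: str):
        self.task = task
        self.context = context                    # only the context this task needs
        self.allowed_dir = PurePosixPath(allowed_dir)
        self.alive = True
        self.changed: list[str] = []

    def edit(self, path: str) -> None:
        """Record an edit, refusing anything outside the allowed directory."""
        if self.allowed_dir not in PurePosixPath(path).parents:
            raise BoundaryError(f"{path} is outside {self.allowed_dir}")
        self.changed.append(path)


@contextmanager
def short_lived_agent(task: str, context: list[str], allowed_dir: str):
    agent = ScopedAgent(task, context, allowed_dir)   # spin up with a narrow task
    try:
        yield agent                                   # agent does its one job
    finally:
        agent.alive = False                           # always shut it down


def run(task: str, allowed_dir: str, target: str) -> list[str]:
    with short_lived_agent(task, [target], allowed_dir) as agent:
        agent.edit(target)
    return agent.changed                              # handed back for human review


jobs = [
    ("Fix date parsing", "src/utils", "src/utils/dates.py"),
    ("Add login test", "tests/auth", "tests/auth/test_login.py"),
    ("Update API docs", "docs/api", "docs/api/invoices.md"),
]
with ThreadPoolExecutor(max_workers=3) as pool:       # three scoped agents in parallel
    results = list(pool.map(lambda job: run(*job), jobs))
print(results)
```

Because each agent holds its own scope and is discarded after one task, a mistake in one work stream cannot silently spread into another part of the codebase.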

11. Build a Real Knowledge Base for Your Agents

Agents are only as good as their context. If your docs are outdated, your repos are messy, and your architecture is unclear, AI will amplify that mess.

Every serious AI-native engineering organization needs a strong internal knowledge system that includes: product specs, architecture docs, API documentation, database schema docs, coding standards, security standards, deployment processes, known anti-patterns, past incident summaries, and customer-specific requirements.

This is where RAG systems, vector databases, documentation indexes, and MCP servers become critical. MCP is especially relevant because it gives AI systems a standard way to connect to external tools and data sources. Originally introduced by Anthropic in late 2024 and now governed by the Linux Foundation, MCP defines an open standard for secure, two-way connections between data sources and AI-powered tools — and it has been adopted across the industry, including by OpenAI and Google. (ssntpl.com MCP Developer Guide, April 2026)

The long-term direction is clear: Your company's internal knowledge base becomes the memory layer for your engineering agents. The better your documentation, the better your agents perform.
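To show the retrieval step in miniature, the sketch below picks the internal documents most relevant to a question before they would be passed to an agent as context. It deliberately swaps in simple word overlap for the embeddings and vector database a real RAG system would use; the function names and the sample documents are made up for illustration.

```python
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the names of the top_k docs sharing the most words with the query."""
    q = tokenize(query)
    scores = {
        name: sum((q & tokenize(body)).values())   # size of the multiset overlap
        for name, body in docs.items()
    }
    ranked = sorted(scores, key=lambda name: scores[name], reverse=True)
    return [name for name in ranked[:top_k] if scores[name] > 0]


knowledge_base = {
    "coding-standards.md": "Use type hints. Keep functions small. Write tests first.",
    "deploy-process.md": "Deploy via the release pipeline. Rollback uses the previous image.",
    "db-schema.md": "The invoices table references customers by customer_id.",
}

print(retrieve("How do we rollback a deploy?", knowledge_base, top_k=1))
# ['deploy-process.md']
```

The point of the example is the shape of the workflow, not the scoring: outdated or missing documents simply cannot be retrieved, which is why documentation quality bounds agent quality.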

12. Use Iteration, Not One-Shot Coding

Never trust a one-shot AI output. This is one of the most important principles of agentic engineering. AI is excellent at generating plausible code. But plausible is not the same as correct.

High-performing teams run code through multiple passes:

- Planning pass — Define the approach and constraints

- Architecture review pass — Validate the design against system requirements

- Implementation pass — Generate the initial code

- Test generation pass — Create comprehensive tests

- Refactor pass — Improve code quality and readability

- Security review pass — Check for vulnerabilities and attack surfaces

- Human final review pass — Expert judgment on correctness and completeness

A good rule of thumb: important work should go through 3–7 iterations before it ships. AI should generate. AI should critique. AI should test. AI should revise. Humans should review and own the final decision.
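The generate, critique, revise loop described above can be sketched as a small driver that keeps running review passes until they all come back clean or an iteration budget is exhausted. The checks and the revision step below are toy stand-ins for AI-driven passes, and every name (`run_passes`, `check_tests`, `check_security`, `toy_revise`) is hypothetical.

```python
from typing import Callable

Pass = Callable[[str], list[str]]   # a pass inspects a draft and returns findings


def run_passes(draft: str, passes: dict[str, Pass],
               revise: Callable[[str, list[str]], str],
               max_iterations: int = 7) -> tuple[str, int, bool]:
    """Repeat critique -> revise until every pass is clean or the budget runs out.

    Returns (final_draft, iterations_used, ready_for_human_review).
    """
    for iteration in range(1, max_iterations + 1):
        findings = [f"{name}: {issue}"
                    for name, check in passes.items()
                    for issue in check(draft)]
        if not findings:
            return draft, iteration, True
        draft = revise(draft, findings)
    return draft, max_iterations, False


# Toy checks standing in for AI test-generation and security-review passes.
def check_tests(draft: str) -> list[str]:
    return [] if "def test_" in draft else ["no tests found"]


def check_security(draft: str) -> list[str]:
    return ["uses eval()"] if "eval(" in draft else []


def toy_revise(draft: str, findings: list[str]) -> str:
    """Stand-in for an AI revision step: fixes one finding per round."""
    if "eval(" in draft:
        return draft.replace("eval(", "int(")
    return draft + "\ndef test_parse():\n    assert parse('2') == 2\n"


draft = "def parse(s):\n    return eval(s)\n"
final, used, ready = run_passes(
    draft, {"tests": check_tests, "security": check_security}, toy_revise
)
print(used, ready)   # 3 True
```

Even when the loop reports a clean result, the output is only "ready for human review"; the final decision still sits with a person, as the section argues.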

13. Make Security a First-Class Agent Workflow

AI-generated code can move fast — that's the good news. AI-generated code can also introduce subtle security vulnerabilities — that's the bad news. Security cannot be bolted on at the end.

Research shows that AI-coauthored PRs have ~1.7x more issues than human-only PRs. (DX Q4 2025 Impact Report) This makes dedicated security review agents essential.

Every AI-native engineering workflow should include dedicated security review agents that check for:

Authentication & Authorization

Flaws in login, session management, and access control.

Injection Attacks

SQL injection, XSS, and command injection, along with related request-forgery flaws such as CSRF.

Data Exposure

Secrets in code, insecure direct object references, data leaks.

LLM-Specific Risks

Prompt injection, data poisoning, and model manipulation risks.

OWASP remains one of the most useful standards here. The OWASP Top 10 is widely used for web application security, and OWASP also maintains a Top 10 for Large Language Model Applications, which has become essential reading for any team shipping LLM features.

Tools like Qodo (formerly CodiumAI, rebranded in September 2024) support AI code review, quality, and governance workflows. Qodo 2.0, released in February 2026, features a multi-agent code review architecture.

The principle is simple: The faster you generate code, the more disciplined your review system must become.
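As one small example of the "Data Exposure" check, a security review step might scan the lines a pull request adds for hard-coded secrets. The sketch below is illustrative only: the three patterns are a tiny, hypothetical rule set, the sample key is fake, and a production workflow would rely on a dedicated secret scanner rather than a handful of regular expressions.

```python
import re

# Illustrative patterns only; real scanners use far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]+['\"]"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_diff(diff_text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for each added line that matches a rule."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        if not line.startswith("+"):        # only inspect lines the PR adds
            continue
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, rule))
    return findings


sample_diff = """\
+import os
+password = "hunter2"
-API_KEY = os.environ["API_KEY"]
+aws_key = "AKIAABCDEFGHIJKLMNOP"
+token = os.environ["TOKEN"]
"""
print(scan_diff(sample_diff))
# [(2, 'hardcoded_password'), (4, 'aws_access_key_id')]
```

A check like this is cheap enough to run on every agent-generated change, which is what makes it a workflow step rather than an end-of-project audit.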

14. Structure Repositories for AI Readability

Most codebases were built for humans. The next generation of codebases will be built for humans and agents. That means repositories need:

- Clear folder structures with consistent naming conventions

- Strong README files that explain architecture and key decisions

- Good inline documentation — agents use comments as context

- Explicit architectural boundaries between modules

- Predictable test patterns that agents can follow

- Clear dependency maps and small, understandable modules

Agents perform better when the repo is structured well. Messy repos create messy AI outputs. This is why engineering leaders should treat documentation and architecture hygiene as productivity infrastructure. It is not "extra work." It is what allows AI agents to safely move faster.
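Repository hygiene of this kind can be checked mechanically. The sketch below audits a list of file paths and reports which top-level modules lack a README or any tests. The conventions it enforces (`README.md` in each module, tests under `tests/test_*`) are assumptions chosen for the example, not requirements stated in this report.

```python
from pathlib import PurePosixPath


def audit_modules(paths: list[str]) -> dict[str, list[str]]:
    """Map each top-level module to the hygiene items it is missing."""
    modules: dict[str, set[str]] = {}
    for raw in paths:
        parts = PurePosixPath(raw).parts
        if len(parts) < 2:
            continue                      # skip files at the repo root
        modules.setdefault(parts[0], set()).add("/".join(parts[1:]))

    report = {}
    for module, files in sorted(modules.items()):
        missing = []
        if "README.md" not in files:
            missing.append("README.md")
        if not any(f.startswith("tests/test_") for f in files):
            missing.append("tests/test_*.py")
        if missing:
            report[module] = missing
    return report


repo_files = [
    "README.md",
    "billing/README.md",
    "billing/invoice.py",
    "billing/tests/test_invoice.py",
    "auth/login.py",
    "auth/tests/test_login.py",
]
print(audit_modules(repo_files))   # {'auth': ['README.md']}
```

Run in CI, a report like this turns documentation hygiene into something visible and trackable instead of a periodic cleanup task.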

15. Redefine Engineering Metrics for the Agentic Era

If you are adopting agentic engineering, your metrics need to change. Old metrics like story points and tickets closed are not enough.

| Metric Category | Traditional Metrics | Agentic Engineering Metrics |
|---|---|---|
| Output | Lines of code, story points | Features shipped, AI-assisted code %, agent runs/week |
| Velocity | Tickets closed per sprint | PR cycle time, time from idea to production |
| Quality | Bug count | Defect rates, rollback frequency, test coverage delta |
| Efficiency | Hours worked | Token usage by team, cost per shipped feature |
| Adoption | N/A | AI adoption by engineer, agent utilization, tool stacking patterns |
| Security | Annual pen test | Security findings per PR, automated scan pass rate |

Cursor Enterprise gives administrators access to comprehensive analytics dashboards showing adoption rates, usage patterns by team and individual, AI-assisted code metrics, and productivity insights — with the AI Code Tracking API mapping AI-generated lines back to specific git commits.

This matters because engineering leaders need to know who is actually changing their workflow. Some engineers will use AI lightly. Some will become radically more productive. You need to identify the second group, learn from them, and spread their workflows across the organization.
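A few of the agentic-era metrics in the table above are straightforward to compute once pull request data is available. The sketch below derives median PR cycle time, AI-assisted code percentage, and token cost per shipped feature from sample records. The `MergedPR` record, its fields, and the sample values are invented for illustration; how lines get attributed to AI is assumed to come from whatever tracking your tooling provides.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class MergedPR:
    opened: datetime
    merged: datetime
    lines_total: int
    lines_ai: int          # lines attributed to AI tooling (attribution method is assumed)
    token_cost_usd: float
    shipped_feature: bool


def summarize(prs: list[MergedPR]) -> dict[str, float]:
    """Compute three agentic-era metrics; assumes at least one shipped feature."""
    cycle_hours = [(pr.merged - pr.opened).total_seconds() / 3600 for pr in prs]
    total_lines = sum(pr.lines_total for pr in prs)
    features = sum(pr.shipped_feature for pr in prs)
    return {
        "median_pr_cycle_hours": median(cycle_hours),
        "ai_assisted_code_pct": 100 * sum(pr.lines_ai for pr in prs) / total_lines,
        "token_cost_per_feature_usd": sum(pr.token_cost_usd for pr in prs) / features,
    }


prs = [
    MergedPR(datetime(2026, 3, 2, 9), datetime(2026, 3, 2, 15), 200, 150, 4.0, True),
    MergedPR(datetime(2026, 3, 3, 10), datetime(2026, 3, 4, 10), 500, 200, 9.0, True),
    MergedPR(datetime(2026, 3, 5, 8), datetime(2026, 3, 5, 10), 100, 50, 2.0, False),
]
print(summarize(prs))
# {'median_pr_cycle_hours': 6.0, 'ai_assisted_code_pct': 50.0, 'token_cost_per_feature_usd': 7.5}
```

Grouping the same calculation by engineer or team is one way to surface the people whose workflows have changed the most.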

16. Balance AI-Native Talent with Senior Judgment

Younger developers often adapt faster to AI-native workflows. They are less attached to the old way of working and more willing to let agents write large amounts of code. But senior engineers remain essential.

AI often does not understand production risk, legacy constraints, customer impact, security implications, system-level tradeoffs, or the blast radius of a change.

The Optimal Team Design

- AI-native speed from younger engineers who embrace agentic workflows

- Architectural judgment from senior engineers who understand system complexity

- Junior/mid-level engineers become dramatically more productive with AI

- Senior engineers become more important as reviewers, architects, and risk managers

This is not a world where experience stops mattering. It is a world where experience gets applied at a higher leverage point.

17. Compress Timeline Expectations

AI changes what "reasonable timeline" means. Many projects that used to take six months should now take six to twelve weeks. Many features that used to take weeks should now take days. Many prototypes that used to take days should now take hours.

Tools like v0 (relaunched by Vercel in early 2026 as a production-grade agentic app builder) and Lovable (full-stack React + Supabase from natural language) have made rapid prototyping radically faster.

Leaders should ask harder questions when timelines are long:

- Is the spec clear enough for agents to execute?

- Are we using agents effectively and parallelizing work?

- Are we over-engineering or waiting on humans to do work AI could draft?

- Are senior engineers reviewing instead of manually implementing?

The new expectation should be: Most software projects should be scoped, phased, and shipped far faster than they were in the pre-agentic era.

18. Create Weekly AI Engineering Practice Sessions

The tooling changes every month. The best practices change every month. The models change every month. So your team needs a learning rhythm.

Every engineering org should have a weekly or biweekly AI engineering session where developers share:

- Best prompts and agent workflows

- Failed experiments and lessons learned

- Useful MCP servers and integrations

- Security issues found in AI-generated code

- Before/after productivity examples

- Better spec templates and review loops

AI transformation is not a one-time rollout. It is an ongoing operating discipline. The teams that compound learning across the organization will pull ahead.