How to Transform Your Engineering Team with Agentic Engineering

This article breaks down a major shift happening inside engineering teams right now: the move from writing code manually to orchestrating AI agents that write it for you. The role of the engineer is evolving from coder to planner, architect, and reviewer, while tools like Claude and Cursor, connected to internal systems through MCP, handle large parts of implementation. The teams that figure this out early will move faster, ship more, and multiply their engineering capacity without hiring at the same pace. This isn’t about adding AI to your workflow. It’s about rebuilding how your entire engineering system operates, and that’s where the real leverage comes from.

The way software gets built has changed.

For decades, engineering productivity was constrained by the speed at which humans could write, review, test, and ship code. That world is ending.

We are now entering the era of agentic engineering: a new operating model where engineers define the plan, AI agents execute large portions of the work, and humans review, refine, secure, and orchestrate the system.

In practice, agentic engineering runs on tools like Claude Code, Cursor, OpenAI Codex, and VS Code with AI extensions, or directly in the terminal.

This is not "using ChatGPT to help with code." This is a deeper shift in how engineering organizations operate.

Since Claude Opus 4.5 launched in November 2025, followed by Opus 4.6 (February 2026) and the current Opus 4.7 (April 2026), Claude has become the default way production code gets written at many AI-native companies.

Tools like Claude Code, Cursor, v0, Lovable, Qodo, and MCP-based internal systems are turning software development from a manual craft into a semi-autonomous production system. Anthropic describes Claude Code as an agentic coding system that reads your codebase, makes changes across files, runs tests, and delivers committed code.

Cursor positions its agents as a way to "turn ideas into code" while developers focus on decisions and architecture. MCP, the Model Context Protocol, has emerged as an open standard for connecting AI applications to tools, databases, files, and workflows. (In December 2025, Anthropic donated MCP to the Linux Foundation's Agentic AI Foundation, and it has since been adopted by OpenAI, Google, and most major AI coding tools.)

The teams that master this transition will ship faster, learn faster, and compound faster than competitors still working in the old model.

The companies that don't will wonder why their competitors suddenly seem to have twice the engineering team.

The Big Shift: From Coders to AI-Native Engineering Orchestrators

The core change is simple:

Engineers are moving from writing code line-by-line to designing plans, systems, and review loops that AI agents execute.

In the old model, developers wrote most of the code manually.

In the new model, developers increasingly become:

- Product interpreters

- Technical planners

- System architects

- Agent orchestrators

- Code reviewers

- Security reviewers

- Quality controllers

The best engineers will still understand code deeply. But their highest-value work will shift from "typing implementation" to "designing the work so AI can implement it correctly."

The goal for modern engineering teams should be bold:

80–90% of routine implementation code should eventually be AI-generated, with humans owning architecture, judgment, review, and risk.

That does not mean developers become less important.

It means the best developers become dramatically more leveraged.

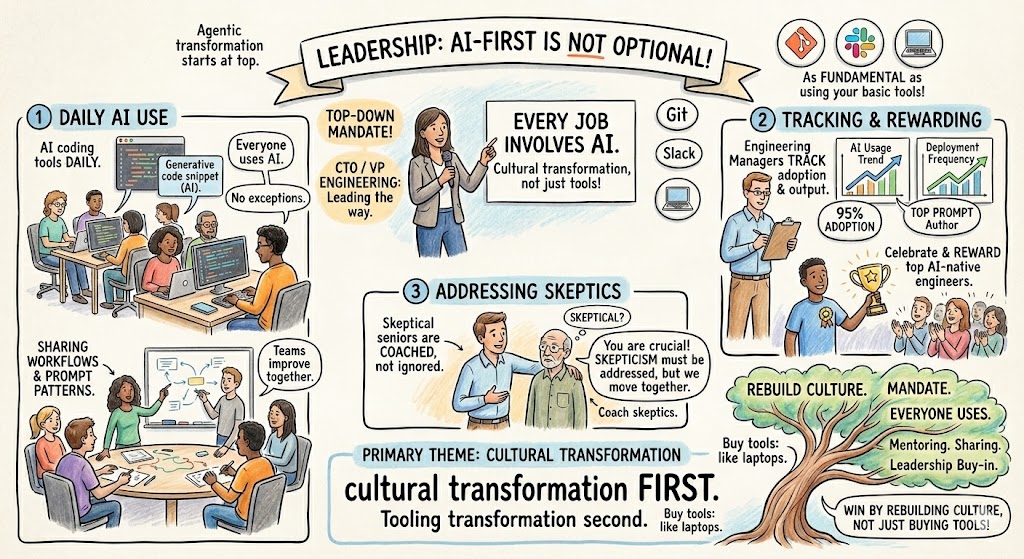

1. Leadership Must Go AI-First

Agentic engineering transformation starts at the top.

If the CTO, VP Engineering, or senior technical leadership is skeptical, the culture will not change fast enough.

AI adoption cannot be treated as a side experiment. It has to become as fundamental as using Git, Slack, Jira, GitHub, or a laptop.

The leadership message should be clear:

Using AI is not optional. It is now part of the job.

That means:

- Every engineer is expected to use AI coding tools daily

- Engineering managers track adoption and output

- Teams share workflows and prompt patterns

- Top AI-native engineers are celebrated and rewarded

- Skeptical senior engineers are coached, not ignored

This is a cultural transformation first and a tooling transformation second.

The companies that win will not just buy AI tools. They will rebuild engineering culture around them.

2. Shift Developers from Coders to Planners

The highest-leverage AI-native engineer is not the person who types the fastest.

It is the person who can create the clearest plan.

Agentic engineering works best when engineers produce detailed specifications before execution. A good plan should define:

- The business goal

- The user behavior

- The affected files and systems

- The constraints

- The edge cases

- The testing requirements

- The rollback plan

- The security considerations

- The success criteria
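One way to make that checklist enforceable is to treat the spec as structured data and refuse to hand it to an agent until every section is filled in. The sketch below is a minimal illustration, not a standard format: the `Spec` class, its field names, and the `ready_for_agents` gate are all hypothetical and simply mirror the list above.

```python
from dataclasses import dataclass, field, fields


@dataclass
class Spec:
    """Hypothetical spec template; one field per item in the checklist."""
    business_goal: str = ""
    user_behavior: str = ""
    affected_files: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    testing_requirements: list[str] = field(default_factory=list)
    rollback_plan: str = ""
    security_considerations: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)


def missing_sections(spec: Spec) -> list[str]:
    """Return the names of sections that are still empty."""
    return [f.name for f in fields(spec) if not getattr(spec, f.name)]


def ready_for_agents(spec: Spec) -> bool:
    """Assumed policy: a spec goes to agents only when nothing is missing."""
    return not missing_sections(spec)


# A half-finished draft (example values are made up).
draft = Spec(
    business_goal="Reduce checkout abandonment",
    user_behavior="Shopper can save a cart and resume later",
    affected_files=["cart/service.py", "cart/models.py"],
)
print(missing_sections(draft))
print(ready_for_agents(draft))
```

A gate like this is deliberately crude. It cannot judge whether a section is good, only whether it exists, which is still enough to stop the most common failure: starting an agent on a one-line request.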

The old bottleneck was "Can we code this?"

The new bottleneck is "Can we describe this precisely enough that agents can build it safely?"

This is why strong product thinking, system design, and written communication are becoming core engineering skills.

In an AI-native engineering organization, specs are not bureaucracy. Specs are leverage.

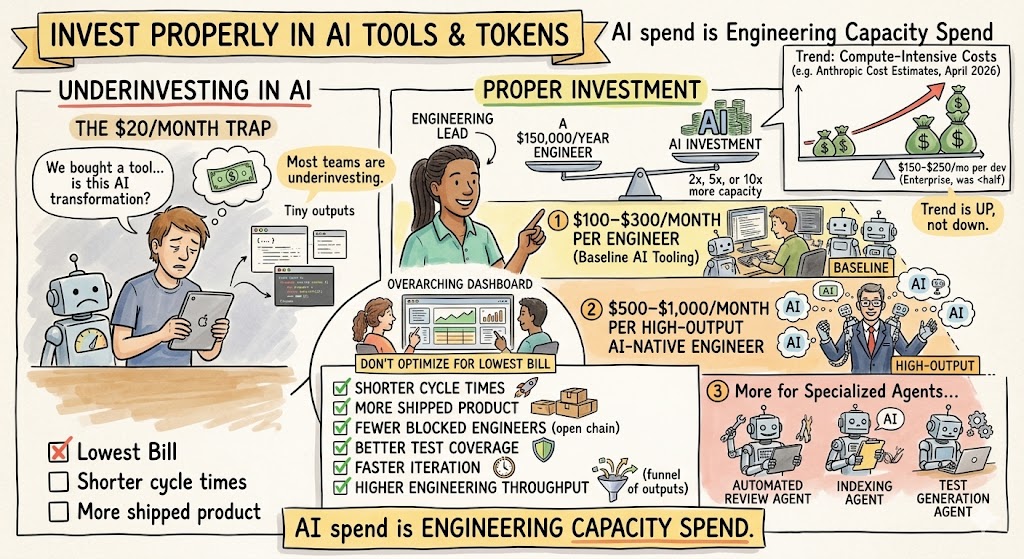

3. Invest Properly in AI Tools and Tokens

Most teams are underinvesting.

They buy a $20/month AI tool and think they are doing AI transformation.

That is not enough.

If AI can make a $150,000/year engineer 2×, 5×, or even 10× more productive on certain types of work, then spending hundreds or even thousands of dollars per month on AI compute can be a rational investment.

A serious AI engineering budget may eventually look like:

- $100–$300/month per engineer for baseline AI tooling

- $500–$1,000/month per high-output AI-native engineer

- More for specialized agents, automated review, indexing, and test generation
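To put those numbers in proportion, it helps to compute the break-even point: how much extra output an engineer needs to produce for the AI spend to pay for itself. The sketch below is back-of-the-envelope arithmetic under a loud assumption, namely that salary is a fair proxy for the value of an engineer's output (it ignores benefits, overhead, and revenue impact). The function name is hypothetical.

```python
def break_even_gain(annual_salary: float, monthly_ai_spend: float) -> float:
    """Fraction of extra output needed for AI spend to pay for itself.

    Assumes the value of an engineer's output equals their salary.
    """
    return (monthly_ai_spend * 12) / annual_salary


salary = 150_000  # the salary figure used in the text
for spend in (20, 300, 1_000):  # dollars per month, from the tiers above
    gain = break_even_gain(salary, spend)
    print(f"${spend}/month breaks even at {gain:.1%} more output")
```

Under that assumption, even $1,000 a month is 8% of a $150,000 salary, so the spend is justified by a productivity gain far smaller than 2×.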

Anthropic's own published estimates for Claude Code now sit at $150–$250 per developer per month for enterprise deployments, with an average of around $13 per active day and 90% of users staying below $30 per active day. Notably, that monthly estimate roughly doubled in April 2026 from prior figures, reinforcing the broader point: serious agentic development is compute-intensive, and the trend is up, not down.

Do not optimize for the lowest AI bill.

Optimize for:

- Shorter cycle times

- More shipped product

- Fewer blocked engineers

- Better test coverage

- Faster iteration

- Higher engineering throughput

AI spend is no longer just software spend. It is engineering capacity spend.

4. Build Agent Systems, Not Just Prompts

The real unlock is not better prompts.

The real unlock is agent systems.

A prompt is a one-off instruction.

An agent system is a repeatable workflow where AI can understand context, perform a task, check its work, hand off to another agent, and operate within constraints.

Modern teams should think in terms of:

- Planning agents

- Coding agents

- Refactoring agents

- Test-writing agents

- Debugging agents

- Security review agents

- Documentation agents

- Release-readiness agents

Claude Code, Cursor, and similar tools are early examples of this shift. Claude Code can work across files and tools (and as of Opus 4.6 supports running agent teams in parallel), while Cursor's team analytics let leaders track AI usage, adoption, and team-level productivity patterns.

The winning pattern is not "one AI does everything."

The winning pattern is "many focused agents, coordinated by humans and orchestration systems."

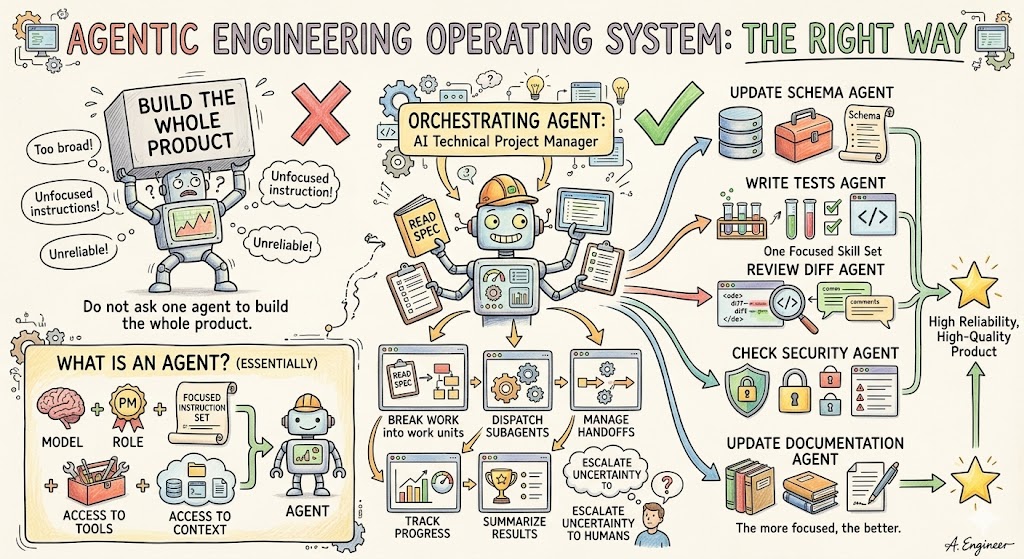

5. Set Up Agents and Subagents the Right Way

This is where agentic engineering becomes an operating system.

The best teams use an orchestrating agent as the central coordinator. The orchestrator understands the plan, breaks it into work units, and dispatches specialized subagents to execute specific parts.

Think of the orchestrator as an AI technical project manager.

Its job is to:

- Read the spec

- Break the work into phases

- Decide which subagent should handle each task

- Manage handoffs

- Track progress

- Summarize results

- Escalate uncertainty back to humans

The subagents should be much narrower.

Each subagent should have one focused skill set.

Examples:

- Frontend component agent

- Backend API agent

- Database migration agent

- Test generation agent

- Refactoring agent

- Performance review agent

- OWASP security review agent

- Documentation agent

- Pull request summary agent

The more focused the agent, the better it performs.

An agent is essentially:

A model + a role + a focused instruction set + access to tools + access to context.

Do not ask one agent to "build the whole product."

Ask one agent to update the schema. Ask another to write tests. Ask another to review the diff. Ask another to check for security vulnerabilities. Ask another to update documentation.

That is how you get reliability.
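The orchestrator and subagent pattern can be sketched in a few lines. This is an illustration of the shape, not any vendor's API: `Agent`, `Task`, `Orchestrator`, and `fake_run` are hypothetical names, and `fake_run` stands in for a real model call so the example runs without network access.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Agent:
    """A model + a role + instructions + tools + context."""
    model: str
    role: str
    instructions: str
    tools: tuple[str, ...] = ()
    context: tuple[str, ...] = ()


@dataclass
class Task:
    kind: str          # routing key, e.g. "schema", "tests", "security"
    description: str


def fake_run(agent: Agent, task: Task) -> str:
    """Stand-in for a real model call; returns a label instead of code."""
    return f"[{agent.role}] done: {task.description}"


class Orchestrator:
    def __init__(self, subagents: dict[str, Agent],
                 run: Callable[[Agent, Task], str] = fake_run):
        self.subagents = subagents
        self.run = run

    def dispatch(self, tasks: list[Task]) -> tuple[list[str], list[Task]]:
        """Route each task to its subagent; escalate anything unrouted."""
        results, escalated = [], []
        for task in tasks:
            agent = self.subagents.get(task.kind)
            if agent is None:
                escalated.append(task)  # uncertainty goes back to a human
            else:
                results.append(self.run(agent, task))
        return results, escalated


orchestrator = Orchestrator({
    "schema": Agent("example-model", "Database migration agent",
                    "Only edit files under migrations/"),
    "tests": Agent("example-model", "Test generation agent",
                   "Only edit files under tests/"),
})
results, escalated = orchestrator.dispatch([
    Task("schema", "add saved_carts table"),
    Task("tests", "cover cart resume flow"),
    Task("billing", "change invoice rounding"),
])
print(results)
print([task.kind for task in escalated])
```

The design choice worth noticing is the escalation path: a task with no matching subagent is returned to a human rather than handed to the nearest available agent.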

6. Keep Agent Lifecycles Short-Lived

Long-running agents tend to drift.

They accumulate too much context, lose focus, and start making assumptions.

Short-lived agents perform better.

The ideal pattern is:

- Spin up the agent

- Give it a narrow task

- Provide only the context it needs

- Have it complete the task

- Review the output

- Shut it down

This keeps the work clean and auditable.
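The spin-up, scope, review, shut-down cycle maps naturally onto a context manager. The sketch below assumes a hypothetical `ScopedAgent` that only records which files it was asked to edit. A real agent would call a model and write to disk, but the boundary checks would look similar.

```python
from contextlib import contextmanager
from pathlib import PurePosixPath


class ScopedAgent:
    """Hypothetical agent handle limited to one task and a set of directories."""

    def __init__(self, task: str, allowed_dirs: list[str]):
        self.task = task
        self.allowed_dirs = [PurePosixPath(d) for d in allowed_dirs]
        self.log: list[str] = []
        self.active = True

    def edit(self, path: str) -> None:
        if not self.active:
            raise RuntimeError("agent already shut down")
        target = PurePosixPath(path)
        # Reject ".." so a path cannot climb out of an allowed directory.
        in_scope = ".." not in target.parts and any(
            target.is_relative_to(d) for d in self.allowed_dirs
        )
        if not in_scope:
            raise PermissionError(f"{path} is outside this agent's scope")
        self.log.append(f"edited {path}")


@contextmanager
def short_lived_agent(task: str, allowed_dirs: list[str]):
    agent = ScopedAgent(task, allowed_dirs)  # spin up with a narrow task
    try:
        yield agent                          # do the work
    finally:
        agent.active = False                 # always shut down


with short_lived_agent("add saved_carts migration", ["migrations"]) as agent:
    agent.edit("migrations/0042_saved_carts.py")

audit_trail = agent.log  # what a human reviews after the agent is gone
print(audit_trail)
```

Because the agent is deactivated in `finally`, it shuts down even when the task fails, and the log remains available for review.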

In practice, many teams run 3–4 terminal-based agents simultaneously, each working on a different scoped task.

This gives you parallel execution without turning the system into chaos.

The key is that every agent needs boundaries.

No agent should be allowed to roam freely across the codebase without understanding the blast radius of its changes.

7. Build a Real Knowledge Base for Your Agents

Agents are only as good as their context.

If your docs are outdated, your repos are messy, and your architecture is unclear, AI will amplify that mess.

Every serious AI-native engineering org needs a strong internal knowledge system.

This should include:

- Product specs

- Architecture docs

- API documentation

- Database schema docs

- Coding standards

- Security standards

- Deployment processes

- Known anti-patterns

- Past incident summaries

- Customer-specific requirements

This is where RAG systems, vector databases, documentation indexes, and MCP servers become important.

MCP is especially relevant because it gives AI systems a standard way to connect to external tools and data sources. Introduced by Anthropic in late 2024 and now governed by the Linux Foundation, MCP is an open standard for secure, two-way connections between data sources and AI-powered tools, and it has been adopted across the industry.

The long-term direction is clear:

Your company's internal knowledge base becomes the memory layer for your engineering agents.
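As a rough picture of what "memory layer" means in practice, the sketch below picks the few documents most relevant to a task and passes only those to the agent. It swaps in plain word overlap for the embedding search a real RAG pipeline or vector database would use, so treat it as a toy. The function names and the sample documents are invented for illustration.

```python
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def top_docs(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Rank docs by shared-word count with the query; drop zero-score docs."""
    query_words = tokenize(query)
    scored = []
    for name, body in docs.items():
        overlap = sum((query_words & tokenize(body)).values())
        if overlap:
            scored.append((overlap, name))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:k]]


# Made-up knowledge base entries.
knowledge_base = {
    "coding-standards.md": "All database migration files must be reversible and reviewed.",
    "incident-checkout.md": "Checkout outage caused by an unindexed cart lookup.",
    "deploy.md": "Deploy using our release pipeline once tests pass.",
}
context = top_docs(
    "write a reversible database migration for the cart table",
    knowledge_base,
)
print(context)
```

The point is the selection step itself: the agent gets the coding standard and the relevant incident, not the whole wiki.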

8. Use Iteration, Not One-Shot Coding

Never trust a one-shot AI output.

This is one of the most important principles of agentic engineering.

AI is excellent at generating plausible code. But plausible is not the same as correct.

High-performing teams run code through multiple passes:

- Planning pass

- Architecture review pass

- Implementation pass

- Test generation pass

- Refactor pass

- Security review pass

- Human final review pass

A good rule of thumb:

Important work should go through 3–7 iterations before it ships.
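A multi-pass loop can be expressed as a list of passes that run repeatedly until a full round comes back clean or the round budget is spent. The sketch below is one possible shape under stated assumptions: `run_passes`, `Review`, and the two toy passes are hypothetical, and each pass is a plain function where a real system would call an agent.

```python
from dataclasses import dataclass, field
from typing import Callable

# Each pass takes the current draft and returns (new_draft, issues_found).
Pass = Callable[[str], tuple[str, list[str]]]


@dataclass
class Review:
    draft: str
    history: list[str] = field(default_factory=list)
    clean: bool = False


def run_passes(draft: str, passes: list[tuple[str, Pass]],
               max_rounds: int = 7) -> Review:
    """Repeat the pass sequence until a round finds no issues."""
    review = Review(draft)
    for round_no in range(1, max_rounds + 1):
        issues: list[str] = []
        for name, step in passes:
            review.draft, found = step(review.draft)
            issues += found
            review.history.append(
                f"round {round_no}: {name} ({len(found)} issues)"
            )
        if not issues:
            review.clean = True  # ready for the human final review
            break
    return review


def add_tests(draft: str) -> tuple[str, list[str]]:
    """Toy test-generation pass: appends a marker if tests are missing."""
    if "tests" in draft:
        return draft, []
    return draft + "+tests", ["no tests"]


def security_check(draft: str) -> tuple[str, list[str]]:
    """Toy security pass: rewrites one obviously unsafe call."""
    if "eval(" in draft:
        return draft.replace("eval(", "parse("), ["eval on user input"]
    return draft, []


result = run_passes(
    "handler: eval(body)",
    [("test generation", add_tests), ("security review", security_check)],
)
print(result.draft, result.clean)
print(result.history)
```

Note that `clean` only means the automated passes stopped finding problems. The human final review still sits outside this loop.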

This is how you turn AI from a clever assistant into a reliable engineering production system.

AI should generate. AI should critique. AI should test. AI should revise. Humans should review and own the final decision.

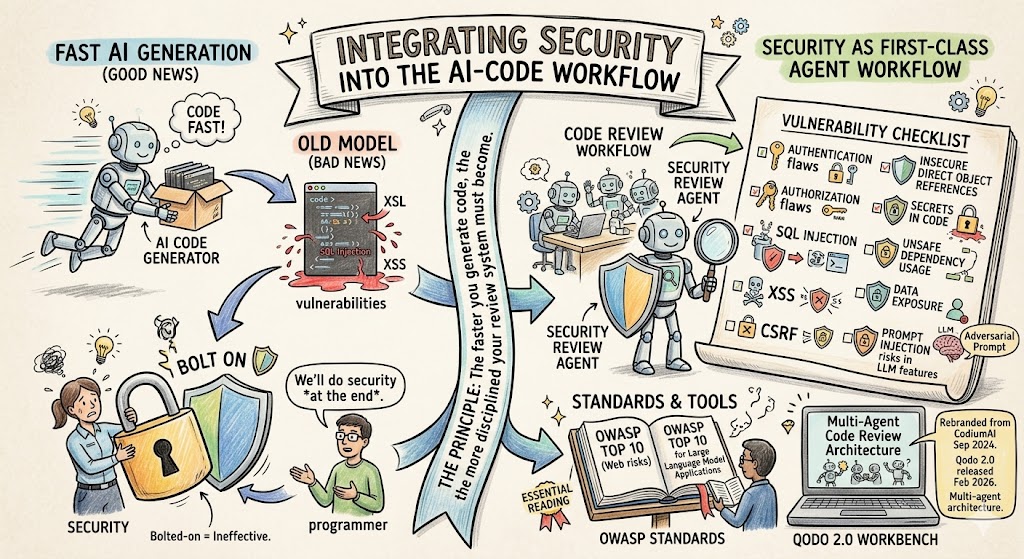

9. Make Security a First-Class Agent Workflow

AI-generated code can move fast. That is the good news.

AI-generated code can also introduce subtle security vulnerabilities. That is the bad news.

Security cannot be bolted on at the end.

Every AI-native engineering workflow should include dedicated security review agents.

These agents should check for:

- Authentication flaws

- Authorization flaws

- SQL injection

- XSS

- CSRF

- Insecure direct object references

- Secrets in code

- Unsafe dependency usage

- Data exposure

- Prompt injection risks in LLM features
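Some of these checks do not need a model at all. As one small example, a deterministic scan for secrets in added lines can run alongside the review agents and catch the obvious cases cheaply. The sketch below covers only the "secrets in code" item, with two illustrative patterns. The rule names and `scan_added_lines` are hypothetical, and a production scanner would use a far larger rule set.

```python
import re

# Illustrative patterns only; real scanners ship many more rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_credential": re.compile(
        r"(?i)\b(password|secret|api_key|token)\b\s*=\s*['\"][^'\"]{4,}['\"]"
    ),
}


def scan_added_lines(diff: str) -> list[tuple[int, str]]:
    """Return (line_number_in_diff, rule_name) for each added line that matches."""
    findings = []
    for number, line in enumerate(diff.splitlines(), start=1):
        if not line.startswith("+") or line.startswith("+++"):
            continue  # only inspect lines the change adds
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, rule))
    return findings


# Made-up diff; the AWS value is the placeholder key from AWS documentation.
sample_diff = """\
+++ b/settings.py
+DEBUG = False
+API_KEY = "sk_live_123456"
-OLD_PASSWORD = "hunter2!"
+aws_id = "AKIAIOSFODNN7EXAMPLE"
"""
findings = scan_added_lines(sample_diff)
print(findings)
```

A check like this complements the security agent rather than replacing it: it is fast and predictable, but it knows nothing about authorization logic or data exposure.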

OWASP remains one of the most useful standards here. The OWASP Top 10 is widely used as an awareness document for the most critical web application security risks, and OWASP also maintains a Top 10 for Large Language Model Applications, which has become essential reading for any team shipping LLM features.

Tools like Qodo can also support AI code review, code quality, and governance workflows. Qodo (formerly CodiumAI, also known as Codium, and rebranded in September 2024) released Qodo 2.0 in February 2026 with a multi-agent code review architecture.

The principle is simple:

The faster you generate code, the more disciplined your review system must become.

10. Structure Repositories for AI Readability

Most codebases were built for humans.

The next generation of codebases will be built for humans and agents.

That means repositories need:

- Clear folder structures

- Consistent naming conventions

- Strong README files

- Good inline documentation

- Explicit architectural boundaries

- Predictable test patterns

- Clear dependency maps

- Small, understandable modules

Agents perform better when the repo is structured well.

Messy repos create messy AI outputs.

This is one reason engineering leaders should treat documentation and architecture hygiene as productivity infrastructure. It is not "extra work." It is what allows AI agents to safely move faster.

11. Redefine Engineering Metrics

If you are adopting agentic engineering, your metrics need to change.

Old metrics like story points and tickets closed are not enough.

New metrics should include:

- AI adoption by engineer

- AI-assisted code percentage

- Agent runs per week

- Token usage by team

- Cost per shipped feature

- PR cycle time

- Test coverage changes

- Defect rates

- Security findings

- Rollbacks

- Time from idea to production
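Several of these metrics fall out of data most teams already have on their pull requests. The sketch below computes four of them from a hand-made list of records. The `PullRequest` fields are assumptions about what your tooling can export (in particular, attributing lines to AI requires tracking support), and cost per PR is used here as a rough proxy for cost per shipped feature.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PullRequest:
    opened: datetime
    merged: datetime
    lines_total: int
    lines_ai: int          # assumes tooling can attribute lines to AI
    token_cost_usd: float  # assumes token spend is tagged per PR
    rolled_back: bool = False


def summarize(prs: list[PullRequest]) -> dict[str, float]:
    """Compute a few team-level metrics from merged pull requests."""
    hours = [(pr.merged - pr.opened).total_seconds() / 3600 for pr in prs]
    total_lines = sum(pr.lines_total for pr in prs)
    return {
        "median_cycle_hours": median(hours),
        "ai_assisted_pct": 100 * sum(pr.lines_ai for pr in prs) / total_lines,
        "cost_per_pr_usd": sum(pr.token_cost_usd for pr in prs) / len(prs),
        "rollback_rate_pct": 100 * sum(pr.rolled_back for pr in prs) / len(prs),
    }


# Made-up sample data.
prs = [
    PullRequest(datetime(2026, 1, 5, 9), datetime(2026, 1, 5, 15), 200, 160, 4.0),
    PullRequest(datetime(2026, 1, 6, 9), datetime(2026, 1, 7, 9), 300, 240, 9.0,
                rolled_back=True),
    PullRequest(datetime(2026, 1, 8, 10), datetime(2026, 1, 8, 12), 100, 50, 2.0),
]
stats = summarize(prs)
print(stats)
```

Tracking rollbacks and defect rates next to the AI-assisted percentage matters: a rising share of AI-written code is only good news if quality holds.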

Cursor Enterprise, for example, gives administrators analytics dashboards showing adoption rates, usage patterns by team and individual, AI-assisted code metrics, and productivity insights. Its AI Code Tracking API, available on Enterprise plans, maps AI-generated lines back to specific git commits.

This matters because engineering leaders need to know who is actually changing their workflow.

Some engineers will use AI lightly.

Some will become radically more productive.

You need to identify the second group, learn from them, and spread their workflows across the organization.

12. Balance Younger AI-Native Talent with Senior Judgment

Younger developers often adapt faster to AI-native workflows.

They are less attached to the old way of working and more willing to let agents write large amounts of code.

But senior engineers remain essential.

Why?

Because AI often does not understand:

- Production risk

- Legacy constraints

- Customer impact

- Security implications

- System-level tradeoffs

- The blast radius of a change

The best team design pairs:

AI-native speed from younger engineers with architectural judgment from senior engineers.

Junior and mid-level engineers may become dramatically more productive with AI.

Senior engineers become more important as reviewers, architects, and risk managers.

This is not a world where experience stops mattering.

It is a world where experience gets applied at a higher leverage point.

13. Compress Timeline Expectations

AI changes what "reasonable timeline" means.

Many projects that used to take six months should now take six to twelve weeks.

Many features that used to take weeks should now take days.

Many prototypes that used to take days should now take hours.

Tools like v0 and Lovable have made rapid prototyping radically faster. v0 (now at v0.app) started as a prototyping tool and was relaunched by Vercel in early 2026 as a production-grade agentic app builder, capable of importing GitHub repos, opening PRs, and shipping full applications. Lovable positions itself as an AI app builder for apps, websites, and digital products, generating full-stack codebases (React, Supabase, Tailwind) from natural language.

This does not mean every enterprise-grade system can be rushed.

But it does mean leaders should ask harder questions when timelines are long:

- Is the spec clear enough?

- Are we using agents effectively?

- Are we parallelizing work?

- Are we over-engineering?

- Are we waiting on humans to do work AI could draft?

- Are senior engineers reviewing instead of manually implementing?

The new expectation should be:

Most software projects should be scoped, phased, and shipped far faster than they were in the pre-agentic era.

14. Create Weekly AI Engineering Practice Sessions

The tooling changes every month.

The best practices change every month.

The models change every month.

So your team needs a learning rhythm.

Every engineering org should have a weekly or biweekly AI engineering session where developers share:

- Best prompts

- Best agent workflows

- Failed experiments

- Useful MCP servers

- Security issues found

- Before/after productivity examples

- Better spec templates

- Better review loops

This is how you compound learning across the team.

AI transformation is not a one-time rollout.

It is an ongoing operating discipline.

Recommended Agentic Engineering Stack

Here is a practical stack for SaaS teams.

AI Coding

- Claude Code — Anthropic's agentic coding system, available via terminal CLI, IDE extensions (VS Code, JetBrains), desktop app, and browser; runs Opus 4.7 and Sonnet 4.6

- Cursor — AI-native IDE (a VS Code fork) with team analytics, plan mode, and parallel agents; supports models from OpenAI, Anthropic, Google, and xAI

- OpenAI Codex — OpenAI's agentic coding platform spanning CLI, IDE extensions, web, and desktop app, powered by GPT-5.x-Codex models with native computer use

- VS Code — still a foundational editor for many teams, now usually paired with AI extensions

Prototyping & App Building

- v0 (v0.app) — Vercel's AI builder; from prototype to production with GitHub integration, sandboxes, and one-click deploy

- Lovable — AI app and website builder for full-stack web apps (React + Supabase)

- Vercel — deployment and hosting for frontend/product demos

Code Review and Quality

- Qodo — AI code review and quality platform (formerly CodiumAI), with multi-agent review and pull request automation

- Custom review agents — especially for internal standards

- Human senior engineering review — still mandatory for important systems

Security

- OWASP Top 10

- OWASP Top 10 for LLM Applications

- Dedicated security review agents

- Manual penetration testing for critical systems

Context and Knowledge

- MCP servers (now governed by the Linux Foundation, supported across Anthropic, OpenAI, and Google ecosystems)

- RAG pipelines

- Vector databases such as Pinecone, Weaviate, or pgvector

- Internal architecture docs

- Repo-level knowledge indexes

Orchestration

- Terminal-based agents

- Custom internal agent runners

- CI/CD-integrated agents

- Agent workflows tied to GitHub, GitLab, Linear, Jira, Slack, and internal docs

The Real Goal: An AI-Native Engineering Culture

Agentic engineering is not about replacing developers.

It is about amplifying the best developers and changing the shape of the engineering team.

The companies that win will not be the ones that merely "allow" AI tools.

They will be the ones that rebuild the entire software development lifecycle around AI-native execution.

That means:

- AI-first leadership

- Engineers as planners and orchestrators

- Agents and subagents for execution

- Strong internal knowledge systems

- Rigorous review loops

- Security-first workflows

- Better metrics

- Faster timelines

- Continuous team learning

This is the new engineering operating system.

And the CEOs and CTOs who understand it now will have a major advantage.

Because the question is no longer:

"How many engineers do we have?"

The better question is:

"How much agentic engineering capacity can our team orchestrate?"

That is the new frontier.

And it is going to transform how every serious SaaS company builds software.